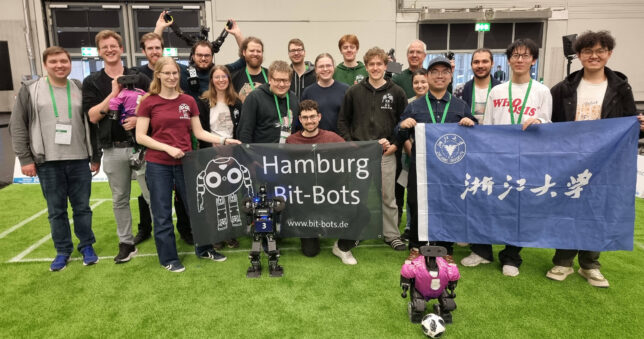

The German Open took place from March 10 to March 14, 2026, in Nuremberg – and of course, the Bit-Bots were there once again. After an intense and exciting tournament, we secured second place and finished as the best German team in the Small Division.

This year, our team size was something special: with a total of 15 members, we brought one of the largest Bit-Bots teams in recent years. Responsibilities were well distributed, and thanks to structured meetings and strong team leadership, collaboration worked smoothly throughout the entire event.

A Rough Start, Strong Progress

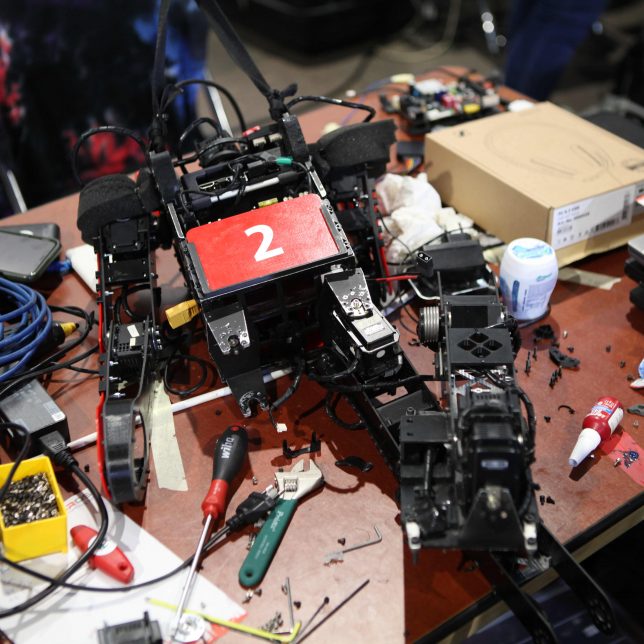

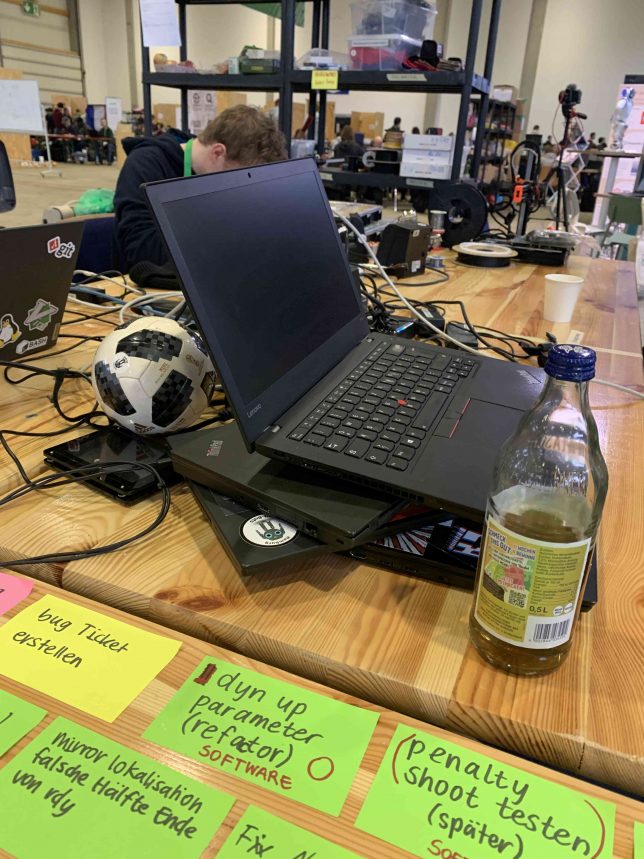

The start of the tournament was challenging. Shortly before the competition, we had made some changes to our software, which introduced a few unexpected bugs. As a result, the first matches did not go as planned. But that’s exactly what competitions are for: we identified and fixed the issues step by step and improved continuously over the course of the tournament.

Looking Ahead: Our New Robot X02

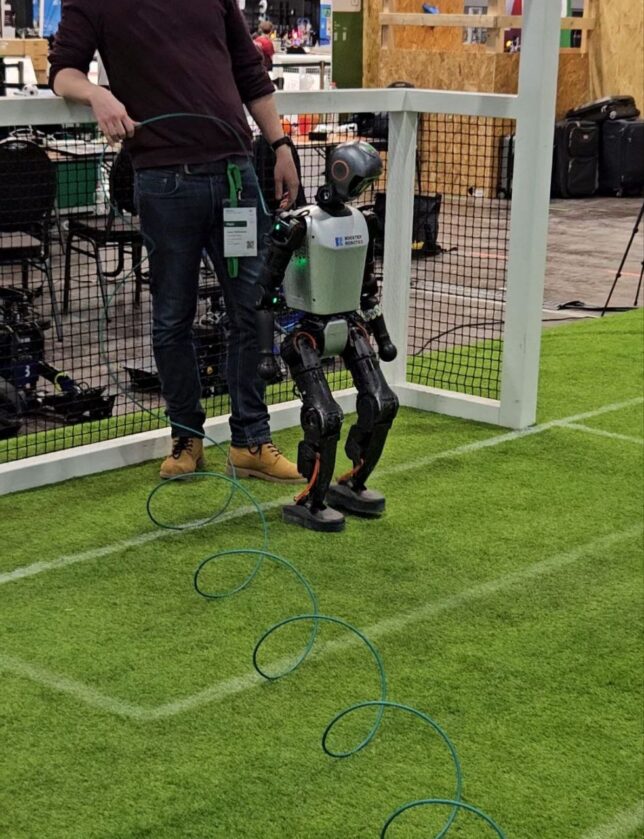

Alongside the matches, we also took the opportunity to test our new robot, X02. Standing about 170 cm tall and weighing around 30 kg, it represents a major step toward large-size robotics. Since we only received it shortly before the competition, competing with it was not yet an option. Nevertheless, we successfully brought key components such as vision, localization, and path planning into operation.

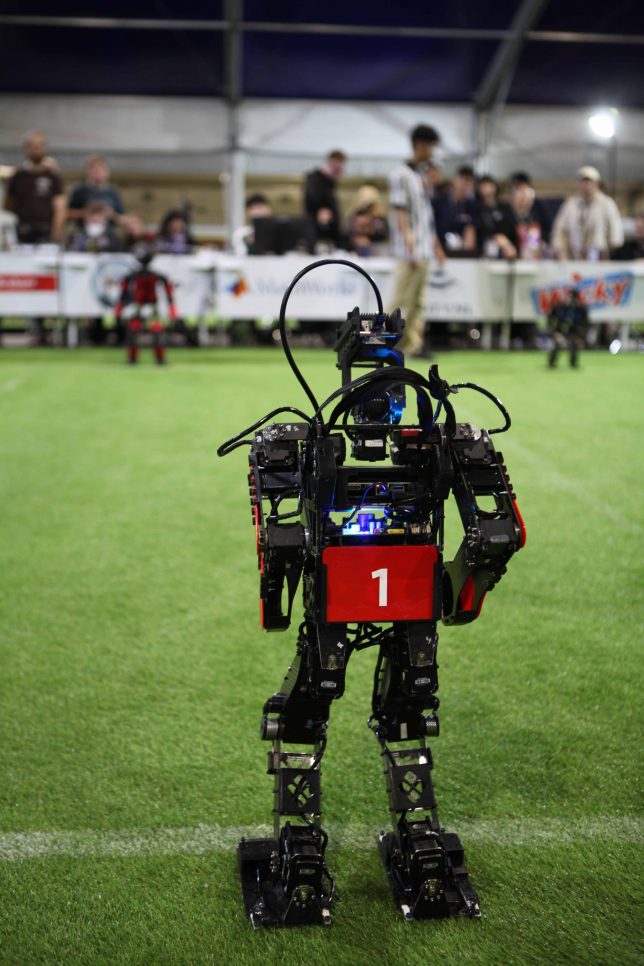

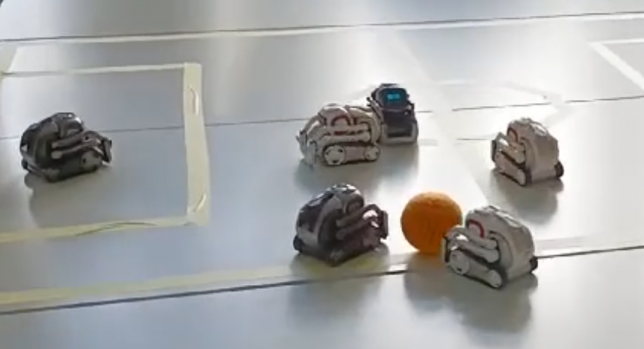

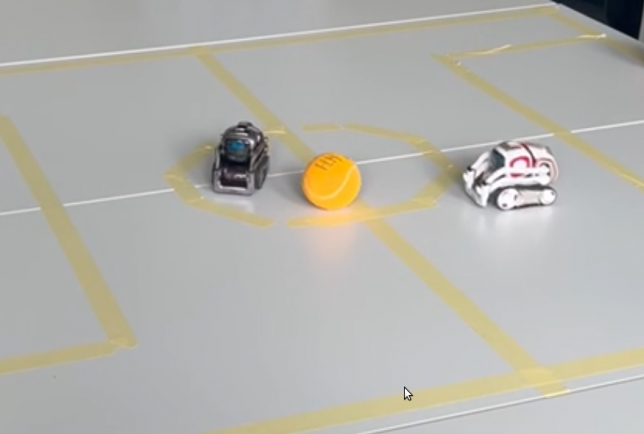

These developments align with broader structural changes in RoboCup: the Humanoid League and the Standard Platform League have been merged into the new Humanoid Soccer League, which has three divisions (Small, Middle, and Large). We competed in the Small Division with our “Wolfgang” robots, while X02 is planned to compete in the Large Division in the future.

New League, New Systems, New Challenges

With the new league structure came an updated GameController, which transmits the referees’ decisions to the robots. Especially at the beginning of the tournament, we had to make several adjustments, as quite a few things had changed compared to the previous year.

In this context, we also worked on integrating whistle detection. Because the GameController sends the start signal with a slight delay, a robot that reacts to the referee’s whistle itself can start moving earlier, which is a clear advantage at kick-off. We implemented this feature during the tournament and used it successfully in matches.

At the same time, the league merger brought more variety to the competition: instead of facing only a single team as in the previous year, we now competed against several new opponents.

More Than Just Matches

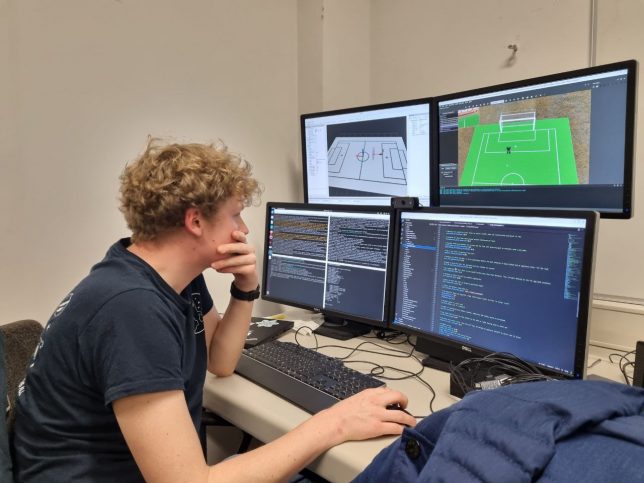

Thanks to our large team, we were able to contribute beyond just playing matches. Some of us took on moderation roles, which meant that our games were featured multiple times in the official livestream (https://www.youtube.com/@robocupgermany/streams). Meanwhile, other Bit-Bots members worked on reinforcement learning approaches for the Wolfgang platform.

As always, providing referees was also part of our responsibilities. Our two referees even assisted in a final match and now feel ready to officiate games at the World Championship.

A Strong Final – and Valuable Insights

As is often the case in tournaments, our hardware was no longer in perfect condition by the end—multiple matches inevitably leave their mark. In preparation for the final, we worked intensively on our robots to get the best possible performance out of them.

Our sporting journey led us to an exciting final against ZJU Dancer, a Chinese team whose significantly more powerful hardware was especially noticeable in their on-field speed. Having previously managed a 1:1 draw against them, we lost the final 1:4.

Despite the loss, we gained valuable insights: we had the opportunity to take a closer look at our opponent’s hardware and used this chance to gather ideas and even components for our own future development.

Farewell to the “Wolfgangs”

The German Open 2026 also marked a special moment for us: it was the final competition for our “Wolfgang” platform. Over many years, these robots have shaped our team and enabled numerous successes.

Saying goodbye is not easy—but it is a necessary step toward the next generation of robots.

Looking Ahead

Our focus is now firmly on the future: with new hardware and renewed motivation, we aim to compete strongly at the World Championship at the end of June. The experience gained in Nuremberg provides an important foundation for this.

A key step in this preparation is the High-Torque Pi+ robot, which we were able to borrow until the World Championship. It allows us to work under realistic conditions with more powerful hardware, test new approaches, and directly incorporate the insights we gain into our own development.

In the end, one thing stands out above all: we are proud of our performance, happy with second place—and we had a great time at the German Open.